Rogerio Feris, Ramesh Raskar, Longbin Chen, Karhan Tan, Matthew Turk Published in IEEE International Conference in Computer Vision [Paper PDF | Slides PPT |Datasets | Source Code] |

AbstractCurrently, sharp discontinuities in depth and occlusions in

multiview imaging systems pose serious challenges for many dense

correspondence algorithms. However, it is important for 3D

reconstruction methods to preserve depth edges as they correspond to

important shape features like silhouettes which are critical for

understanding the structure of a scene. In this paper we show how active illumination

algorithms can produce a rich set of feature maps that

are useful in dense 3D reconstruction.

We start by showing how to compute a qualitative depth map from a single camera, which encodes object

relative distances, being a useful prior for stereo. In a multiview setup, we show that along with depth edges,

binocular half-occluded pixels can also be explicitly and reliably labeled.

To demonstrate the

usefulness of these feature maps, we show how they can be used in |

||||||||||||||||||||

|

||||||||||||||||||||

|

||||||||||||||||||||

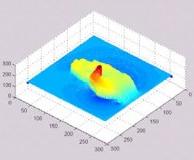

Generating the Normal MapWe used our NPR output obtained with multi-flash imaging as a height field to create a normal map. The main advantage of our approach is that the NPR image captures bumpy features such as hair, wrinkles, and beard, allowing us to create fine-detail 3D models and NPR illustrations automatically. In order to create the normal map, we first negate the NPR texture image so that darker regions of the height field are lower and lighter regions are higher. Then, we compute the normals based on partial derivatives of the height field surface, exactly as demonstrated in the CG book [2], page 203. Tangent-space Bump MappingSince we are dealing with arbitrary geometry, we need to align the coordinate system of the normal vectors in the normal map (tangent space) with the light vectors coordinate system. This is done by creating a rotation matrix for each vertex with columns specified by the correspondent normal, binormal, and tangent vectors. See CG book [2], page 225, for how to compute these vectors. We transformed both the light vector and the normal map vectors in the same consistent eye-space coordinate system. Results

|

||||||||||||||||||||

|

||||||||||||||||||||

Multi-Flash Stereo DatasetsWe encourage researchers to develop novel methods for stereo that take into account small baseline multi-flash illumination. We are currently collecting new datasets with different camera-flash configurations. Tripod Scene (tripod.zip 4.7MB) - Challenging scene with ambiguous patterns, textureless regions, thin structures and a geometrically complex object

|

||||||||||||||||||||

Phase FunctionsWhen modeling scattering within the layer, we can use phase functions to describe the result of light interacting with particles in the layer. A phase function describes the scattered distribution of light after a ray hits a particle in the layer. We use Henyey-Greenstein phase function (see [3], page 55), which depends on the incident and outgoing ray, and takes an asymmetric parameter g, that ranges from -1 to 1, spanning the range of strong retro-reflection to strong forward scattering. The phase function is used to determine the BRDF that describes single scattering from a medium (see [3], page 56), along with scattering albedo. Multiple scattering is empirically approximated by adding together three single scattering terms, with different values for the asymmetric parameter g. Fresnel EffectWe need to consider the Fresnel effect, which happens when the light ray enters and exits the surface. This is important to determine the incoming and outgoing directions (and also intensity) of the light rays inside the medium, so that the BRDF/scattering is properly computed. Results

From left to right: subsurface scattering with mostly backscattering (note the glow effect), same as before with bump mapping, subsurface scattering with mostly forward scattering.

|